In the previous post, we saw how to start a reengineering with SonarQube, beginning with a functional redistribution and a redesign of our application.

Now we can go a little deeper into the code to identify processing flows that are good candidates for restructuring.

Restructuring processing flows

Once again, I will use the SQALE Sunburst to navigate through the violations of programming best practices and look for any sign of ‘spaghetti code’.

Figure 1 – Refactoring ‘goto’

This widget lets me quantify the refactoring effort (158.8 days) needed to correct these breaks in the processing flow and these algorithmic jumps in all directions.

I can do the same for each defect of this kind, for example the use of ‘continue’. One click then takes me to the list of programs with these violations:

Figure 2 – List of violations ‘continue’

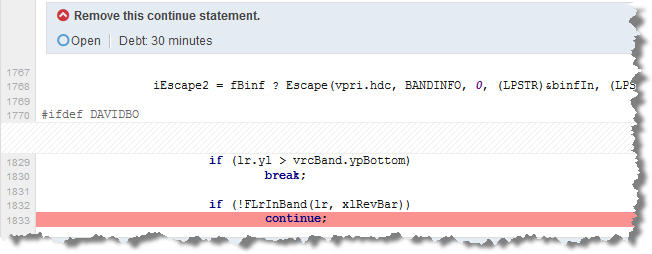

From there, I can drill down into each program and check the code of every line that has one of these violations. The following figure shows a line of code with a ‘continue’ statement and the ‘break’ that accompanies it, along with the estimated technical debt (30 min) to fix this spaghetti code.

Figure 3 – Line with a code violation and estimation of the resolution time

This example shows how SonarQube lets us go straight to the point:

- A general estimate, through the SQALE plugin, of the workload needed to refactor the processing flows.

- A more accurate estimate, at least for the ‘monster’ components: the most complex ones, which will weigh most heavily on the overall reengineering effort.
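To make the roll-up concrete, here is a minimal sketch in Python of how per-line remediation estimates (such as the 30 min mentioned above) add up to a workload in days, and how grouping them by component points to the ‘monster’ components. It assumes an 8-hour working day, which I believe is SonarQube's default for converting technical debt into days. The component names, rules and costs are hypothetical, and the code does not call SonarQube itself.

```python
from collections import defaultdict

# Assumption: working hours per day used to convert minutes into days.
HOURS_PER_DAY = 8

# Hypothetical issues: (component, rule, remediation cost in minutes).
issues = [
    ("BILLING.cbl", "goto", 30),
    ("BILLING.cbl", "continue", 30),
    ("BILLING.cbl", "goto", 30),
    ("orders.c", "break", 10),
    ("orders.c", "continue", 30),
    ("report.abap", "goto", 30),
]


def minutes_to_days(minutes, hours_per_day=HOURS_PER_DAY):
    """Convert a remediation cost in minutes into working days."""
    return minutes / (60 * hours_per_day)


def debt_by_component(issues):
    """Sum remediation minutes per component, largest debt first."""
    totals = defaultdict(int)
    for component, _rule, minutes in issues:
        totals[component] += minutes
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


total_minutes = sum(minutes for _component, _rule, minutes in issues)
print(f"Total: {total_minutes} min = {minutes_to_days(total_minutes):.2f} days")
for component, minutes in debt_by_component(issues):
    print(f"{component}: {minutes} min")
```

Applied to the full list of issues of a real application, the same kind of sum produces a global figure like the 158.8 days above, while the per-component ranking shows where a more detailed review is worth the time.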

The project team can drill down into each program, as we did in this example, to assess unstructured code and rewrite it, preserving the functional logic while changing the algorithmic logic. The solution to ‘goto’, ‘break’ and ‘continue’ is simple: use ‘if..else’. However, be careful not to nest conditional structures too deeply.
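As an illustration, here is a minimal sketch in Python; the same idea applies in C, Cobol or ABAP. The hypothetical function sums positive amounts until it reaches an end marker. The first version jumps out of the normal flow with ‘continue’ and ‘break’; the second expresses the same logic with a loop condition and a plain ‘if’. Python has no ‘goto’, so only ‘break’ and ‘continue’ are shown, and the `END_MARKER` sentinel is an assumption made for the example.

```python
# Hypothetical sentinel marking the end of the useful records.
END_MARKER = None


def total_with_jumps(amounts):
    """Original style: control flow jumps with 'continue' and 'break'."""
    total = 0
    for amount in amounts:
        if amount is END_MARKER:
            break  # leave the loop early
        if amount <= 0:
            continue  # skip invalid amounts
        total += amount
    return total


def total_structured(amounts):
    """Restructured: the exit condition is in the loop header, the filter is an 'if'."""
    total = 0
    index = 0
    while index < len(amounts) and amounts[index] is not END_MARKER:
        if amounts[index] > 0:
            total += amounts[index]
        index += 1
    return total


data = [10, -5, 20, 0, None, 99]
print(total_with_jumps(data), total_structured(data))
```

Both functions return the same result; the structured version keeps a single level of nesting. If a rewrite starts to need several nested ‘if’ blocks, that is usually a sign the loop should be split into smaller functions.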

Each language has its own peculiarities when it comes to processing flows and algorithmic structures, and the bad practices in this area differ between C, Cobol and ABAP. The important thing is to take this point into account, and we have seen how SonarQube can help.

Restructuring data

Reengineering the processing usually also means reengineering the data. This is a subject in itself; one could write an entire book on it. Briefly, here are some points that come to mind:

- Check whether the application uses files, and whether they could be turned into tables. When data is temporary, such as a message log, we tend to put it in files. However, it is always worth asking whether this information would be better stored in a database, since an ‘Insert’ into a table is (usually) faster than writing a line to a file. In both cases, the file or the table will need to be purged when the data volume becomes too large.

- Identify data types, sizes or precisions that seem strange or unusual. Validate them with the users and take this opportunity to ask whether they have any requests in this area.

- Check for shared data structures: look for components that use the same tables or files. In this case, it is important to try to specialize components, because multiple access paths to the same table are a significant source of bugs and higher maintenance costs. I have seen applications where a table was accessed with Insert/Update/Delete from over 80 different classes. Whenever developers needed to perform an operation on the table, they wrote their own SQL queries without checking whether a method already did the same thing. As a result, changing the table structure required checking, and possibly modifying, all these classes. Imagine the investment needed for this task, and the level of risk if you forget one of these methods!

- And while you’re at it, check that there is no redundant data.

- The same goes for data inconsistencies: one screen that displays a measure in km and another in meters, or in € and in K€, or in days and hours, or in hours and minutes, etc.

- Take this opportunity to check for SQL defects in the code. Why all these ‘select *’, ‘select … into select’, nested JOINs or JOINs on too many tables, etc.? We saw many of these ‘Issues’ in our series on PL/SQL analysis with SonarQube.
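Several of the points above can be combined in one minimal sketch in Python: turning a log file into a table, giving a shared table a single owner instead of SQL scattered across many classes, naming columns instead of using ‘select *’, purging when the volume grows, and spotting redundant rows. It uses Python's built-in sqlite3 module with an in-memory database. The `message_log` table, its columns, the `MessageLog` class and the `max_rows` limit are hypothetical, chosen only for illustration.

```python
import sqlite3


class MessageLog:
    """Single owner of every SQL statement on the hypothetical message_log table."""

    def __init__(self, conn, max_rows=1000):
        self.conn = conn
        self.max_rows = max_rows  # hypothetical purge threshold
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS message_log ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " level TEXT NOT NULL,"
            " message TEXT NOT NULL)"
        )

    def add(self, level, message):
        # Explicit column list and bound parameters.
        self.conn.execute(
            "INSERT INTO message_log (level, message) VALUES (?, ?)",
            (level, message),
        )
        self.purge()

    def purge(self):
        # Keep only the most recent max_rows entries, like truncating a log file.
        self.conn.execute(
            "DELETE FROM message_log WHERE id NOT IN ("
            " SELECT id FROM message_log ORDER BY id DESC LIMIT ?)",
            (self.max_rows,),
        )

    def recent(self, limit=10):
        # Name the columns we need rather than using 'select *'.
        rows = self.conn.execute(
            "SELECT level, message FROM message_log ORDER BY id DESC LIMIT ?",
            (limit,),
        )
        return rows.fetchall()

    def duplicates(self):
        # Redundant data check: identical (level, message) pairs stored more than once.
        rows = self.conn.execute(
            "SELECT level, message, COUNT(*) FROM message_log"
            " GROUP BY level, message HAVING COUNT(*) > 1"
        )
        return rows.fetchall()


log = MessageLog(sqlite3.connect(":memory:"), max_rows=3)
for text in ["start", "retry", "retry", "done"]:
    log.add("INFO", text)
print(log.recent())
print(log.duplicates())
```

Because every statement on the table lives in one class, a change to its structure touches one place instead of dozens. Purging on every insert keeps the sketch short; a real application would more likely purge in a scheduled job.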

If you have temporary tables built dynamically during a user session, this usually indicates very complex processing that cannot be handled by ‘simple’ cursors or loops, such as statistical aggregations. Check with the users how critical this processing is. A single application cannot both display all of a customer’s data in less than a second and, at the same time, dynamically correlate and aggregate all kinds of statistics. These are two different applications.

Miscellaneous

Check whether the original application was properly commented, and try to keep at least this level of documentation, or even improve on it.

Naming rules: if they do not exist, now is the time to define them for future development in the new language. If they do exist, make sure to update them based on current standards, which also means checking your choices with other project teams and ensuring that everyone uses the same standards.

Use a code analysis tool to regularly check for new violations of the best practices of the new language, which are not necessarily the same as those of the previous language. Admittedly, many C defects also exist in C++, but the reverse is not true.

And above all, don’t forget unit tests. We have already estimated the workload and possible action plans in this area.

Conclusion

We have not listed all the possible elements of a reengineering action plan; you can imagine many others. In fact, this depends heavily on the project, the application, the technology, etc. There is no standard approach that would invariably guarantee the success of the project, but the points listed above should improve the chances of success of your reengineering.

One last tip: think about the future migration of applications to the Cloud, which means decoupling certain kinds of processing, RESTful APIs, a stateless/stateful strategy, etc.

I think we will soon see more and more applications re-engineered in order to move them to the Cloud.

This article concludes this long series on refactoring and reengineering a legacy application. In the next few posts, I plan to upgrade my SonarQube installation and see what has changed for SAP and Cobol code.

See you soon.

This post is also available in Spanish and French.