In our previous post, we presented Michael Feathers and his book « Working Effectively with Legacy Code », according to which the absence of unit tests is what defines a Legacy application.

He proposes the concept of characterization tests to understand the behavior of the application: they record what it actually does, which is not quite the same as discovering from the code what it is supposed to do.

So what about our Legacy application, which does not have unit tests yet? Can unit tests help us address one of our three scenarios, transferring knowledge of the application to another team? Would that be easier, especially since we also have to consider the other two strategies to evaluate: refactoring and reengineering?

Characterization Tests

In his book, Michael Feathers recommends writing tests designed not only to verify that the code is correct but, above all, to characterize its behavior, that is, to find out what this code really does. This approach has the following advantages:

- It allows, if not a full transfer, at least some acquisition of knowledge about the application by a new team.

- It produces tests that remain valid for a future refactoring or reengineering, since the behavior of the application should stay the same after such an operation.

Michael Feathers recommends proceeding as follows:

- For a block of code to be tested or documented, write a test that you know will fail.

- Run the test and note the value actually returned by the code: this is the current behavior.

- Update the test so that it expects the value the code actually returns: the test now passes and records that behavior.

- Repeat as many times as desired for this block of code.
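
In plain C, without any testing framework, these steps can be sketched as below. This is a minimal sketch, not Feathers’ own example (his is in Java): `legacy_page_label()` is a hypothetical stand-in for a legacy function, and `CHARACTERIZE` is an assumed helper macro that prints the value actually returned whenever the expectation is wrong, which is exactly the information the second step needs.

```c
#include <assert.h>
#include <stdio.h>

/* Hypothetical legacy function (not Opus Word code): pretend we do not
   know how it treats out-of-range page numbers. */
static int legacy_page_label(int page)
{
    if (page <= 0)
        return 1;              /* clamps to the first page */
    if (page > 9999)
        return page % 10000;   /* wraps around instead of failing */
    return page;
}

static int characterization_failures = 0;

/* Hypothetical helper: on mismatch, print the value actually returned,
   so that it can be copied back into the test. */
#define CHARACTERIZE(expr, expected)                                      \
    do {                                                                  \
        long actual_ = (long)(expr);                                      \
        if (actual_ != (long)(expected)) {                                \
            printf("%s:%d: %s returned %ld, test expected %ld\n",         \
                   __FILE__, __LINE__, #expr, actual_, (long)(expected)); \
            characterization_failures++;                                  \
        }                                                                 \
    } while (0)

/* The first version was CHARACTERIZE(legacy_page_label(0), -1), a guess
   known to be wrong; the message reported 1, so the test now records 1. */
static int characterize_page_label(void)
{
    characterization_failures = 0;
    CHARACTERIZE(legacy_page_label(0), 1);
    CHARACTERIZE(legacy_page_label(42), 42);
    CHARACTERIZE(legacy_page_label(12345), 2345);
    return characterization_failures;  /* 0 once every value is recorded */
}
```

A `main` (omitted here) would call `characterize_page_label()` and report success when it returns 0. Printing the actual value, rather than only failing an `assert`, is what turns each failed guess into documentation.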

The example given by Michael Feathers is written in Java, and the implementation of the tests can differ in C. In any case, it will depend on how you want to proceed, especially if you use a testing framework, and on that framework’s features. I will not expand on this point: C/C++ developers know more about this subject than I do and will understand what it involves.

Example of the RTFOUT function

The methodology recommended by Michael Feathers remains the same whatever the technology (C, Java, etc.) and the tools you use. Let’s look at an example with our application, Opus Word 1.1a.

We know that the most complex function, with a Cyclomatic Complexity (CC) of 355, also has 2,063 lines of code (LOC)!
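
As a reminder, McCabe’s cyclomatic complexity is computed on the control-flow graph of a function, and for a single function it also equals the number of binary decision points plus one. Counting rules (for example for `case` labels or `&&` and `||`) vary slightly between tools, so the second line is an approximation of what SonarQube reports:

```latex
\begin{aligned}
M &= E - N + 2P && E \text{ edges},\ N \text{ nodes},\ P \text{ connected components } (P = 1) \\
M &= D + 1      && D \text{ binary decision points} \\
355 &= D + 1 \;\Rightarrow\; D = 354 && \text{for RTFOUT}
\end{aligned}
```

M is also the number of linearly independent paths through the control-flow graph, which gives an idea of how many distinct behaviors are hidden in this single function.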

A glance at the SonarQube dashboard tells me that this function is in a file with the same name, ‘Opus\RTFOUT.c’, and accounts for almost all of its code (the only other function has a CC of 2), with 1,124 statements and a comment rate of 17.5%.

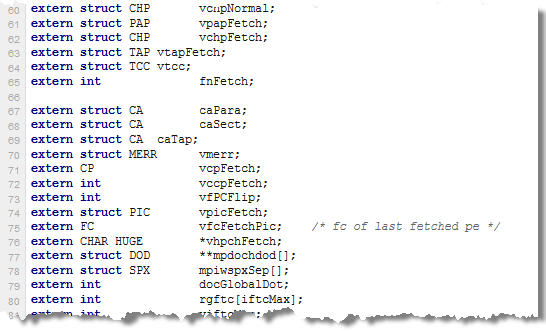

The first 200 lines are an accumulation of includes and of variables with esoteric names, either undocumented or poorly commented.

I won’t even try to understand anything.

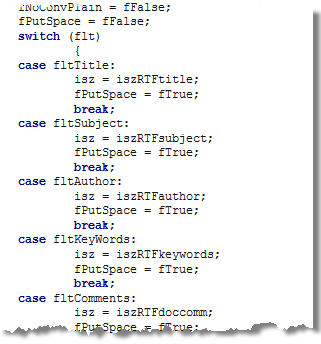

But after a few nested ‘if .. else’ blocks, I come across a first ‘switch’.

I quickly understand that this function produces the RTF (Rich Text Format) output corresponding to the text entered in Word, and that this first ‘switch’ specifies the font family (Modern, Roman, Swiss, etc.) used in that format. The ‘fmc’ variable holds these values.

Then another, very long ‘switch’ handles the document properties, when available: title, subject, author, etc.

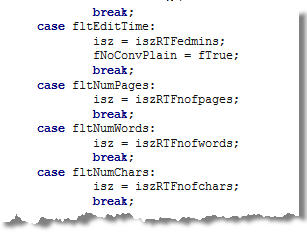

It goes on with the date the document was last edited and the number of pages, words or characters.

I can therefore already identify all the blocks that are simple enough and easy to read, and write characterization tests for them: one for each possible value found in a conditional structure (‘if .. else’, ‘switch’) or a loop (which also depends on a condition). For each of them, we can write one or more tests, with existing values or incorrect ones.
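
For a loop, "one test per value" translates naturally into one test per path: the body never runs, runs once, runs many times, and meets an unusual input. A minimal sketch, assuming a hypothetical word-counting loop `legacy_count_words()` (not Opus Word code) that only treats spaces as separators:

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for a legacy counting loop.
   Quirk: only ' ' separates words; tabs and newlines do not. */
static int legacy_count_words(const char *text)
{
    int words = 0;
    int in_word = 0;
    for (size_t i = 0; text[i] != '\0'; i++) {
        if (text[i] == ' ') {
            in_word = 0;
        } else if (!in_word) {
            in_word = 1;
            words++;
        }
    }
    return words;
}

/* One characterization test per path through the loop. */
static void characterize_count_words(void)
{
    assert(legacy_count_words("") == 0);                /* body never runs       */
    assert(legacy_count_words("x") == 1);               /* single iteration      */
    assert(legacy_count_words("  two   words ") == 2);  /* repeated separators   */
    assert(legacy_count_words("tab\tseparated") == 1);  /* current behavior, right or wrong */
}
```

The last assertion is the interesting one: it does not say whether counting "tab\tseparated" as one word is correct, only that this is what the code does today.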

For example, I will test the different values of the ‘fmc’ variable that appear in the code, ‘FF_ROMAN’, ‘FF_MODERN’ …, as well as a nonexistent value such as ‘FF_WRONG’, to see how the application reacts.
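
A table-driven characterization test for ‘fmc’ could look like the sketch below. We do not have the real declarations, so everything here is an assumption: the `FF_*` values follow the Windows GDI convention (`wingdi.h`) and may differ in the Opus headers, `FF_WRONG` is a deliberately invalid probe, and `rtf_font_family()` is a hypothetical stand-in for the first ‘switch’ of RTFOUT, mapping each family to an RTF control word.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Font-family codes as in Windows GDI; assumed, not taken from Opus Word. */
#define FF_DONTCARE   (0 << 4)
#define FF_ROMAN      (1 << 4)
#define FF_SWISS      (2 << 4)
#define FF_MODERN     (3 << 4)
#define FF_SCRIPT     (4 << 4)
#define FF_DECORATIVE (5 << 4)
#define FF_WRONG      (7 << 4)   /* hypothetical invalid value used as a probe */

/* Hypothetical stand-in for the font-family 'switch'. */
static const char *rtf_font_family(int fmc)
{
    switch (fmc) {
    case FF_ROMAN:      return "\\froman";
    case FF_SWISS:      return "\\fswiss";
    case FF_MODERN:     return "\\fmodern";
    case FF_SCRIPT:     return "\\fscript";
    case FF_DECORATIVE: return "\\fdecor";
    default:            return "\\fnil";   /* FF_DONTCARE and anything unknown */
    }
}

struct fmc_case {
    int fmc;
    const char *expected;
};

/* One row per 'case' label, plus rows for values the switch does not list. */
static const struct fmc_case fmc_cases[] = {
    { FF_ROMAN,      "\\froman"  },
    { FF_SWISS,      "\\fswiss"  },
    { FF_MODERN,     "\\fmodern" },
    { FF_SCRIPT,     "\\fscript" },
    { FF_DECORATIVE, "\\fdecor"  },
    { FF_DONTCARE,   "\\fnil"    },
    { FF_WRONG,      "\\fnil"    },   /* unknown value falls back silently */
};

static void characterize_font_family(void)
{
    for (size_t i = 0; i < sizeof fmc_cases / sizeof fmc_cases[0]; i++)
        assert(strcmp(rtf_font_family(fmc_cases[i].fmc), fmc_cases[i].expected) == 0);
}
```

With the real code, whatever RTFOUT emits for ‘FF_WRONG’ is what the last row must record, even if it turns out to be a bug.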

Likewise for the ‘flt’ variable, which handles the document properties: I can test all kinds of incorrect values to see, once again, how the application behaves. What happens, for example, if I run a test:

- With a number of pages equal to 999,999?

- With special characters (@, #, !, ¿, …) in the name of the author?

- With different formats for the date of the last update?
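
The first two questions can be turned into tests along these lines. This is a sketch under explicit assumptions: `FLT_AUTHOR`, `FLT_PAGES` and `rtf_info_field()` are hypothetical stand-ins for two branches of the document-properties ‘switch’; only the control words `\author` and `\nofpages` and the `\'hh` hex escape come from the RTF specification. A date test would follow the same pattern.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical property codes: the real values of 'flt' are unknown. */
enum { FLT_AUTHOR = 1, FLT_PAGES = 2 };

/* Hypothetical stand-in: writes one RTF info sub-group into out. */
static void rtf_info_field(char *out, size_t size, int flt,
                           const char *text, long number)
{
    size_t n;
    const unsigned char *p;

    if (size < 32) {                 /* keep the sketch simple: refuse tiny buffers */
        if (size > 0)
            out[0] = '\0';
        return;
    }
    switch (flt) {
    case FLT_PAGES:
        snprintf(out, size, "{\\nofpages %ld}", number);
        break;
    case FLT_AUTHOR:
        n = (size_t)snprintf(out, size, "{\\author ");
        /* keep room for the longest escape (4 chars), the closing brace and the NUL */
        for (p = (const unsigned char *)text; *p != '\0' && n + 6 < size; p++) {
            if (*p == '\\' || *p == '{' || *p == '}')
                n += (size_t)snprintf(out + n, size - n, "\\%c", *p);
            else if (*p > 0x7F)      /* non-ASCII byte: RTF hex escape */
                n += (size_t)snprintf(out + n, size - n, "\\'%02x", (unsigned)*p);
            else
                out[n++] = (char)*p;
        }
        snprintf(out + n, size - n, "}");
        break;
    default:
        out[0] = '\0';               /* unknown property: nothing is emitted */
        break;
    }
}

/* Characterization tests for the unusual inputs listed above. */
static void characterize_info_fields(void)
{
    char buf[128];

    rtf_info_field(buf, sizeof buf, FLT_PAGES, NULL, 999999L);
    assert(strcmp(buf, "{\\nofpages 999999}") == 0);

    rtf_info_field(buf, sizeof buf, FLT_AUTHOR, "J@ne #1!", 0);
    assert(strcmp(buf, "{\\author J@ne #1!}") == 0);

    /* 0xBF is the inverted question mark in Windows-1252 */
    rtf_info_field(buf, sizeof buf, FLT_AUTHOR, "\xBF" "Qui" "\xE9" "n?", 0);
    assert(strcmp(buf, "{\\author \\'bfQui\\'e9n?}") == 0);

    rtf_info_field(buf, sizeof buf, 99, "ignored", 0);
    assert(buf[0] == '\0');
}
```

Against the real RTFOUT, the expected strings would be whatever the application actually produces, copied from the failing run.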

Of course, some blocks of code, such as the one that manages the in-memory table of bookmarks of a Word document, will be too complex to understand and test properly without help. But remember that the primary goal is not to understand what the application is supposed to do through its code, but to characterize its behavior.

I also quickly found blocks of code that are repeated before each loop or each ‘switch’. I suppose they initialize variables before each processing step. I make a note to check the documentation (if there is any) or to ask the current team what these variables are. If these blocks are repeated so often, they may have an impact on the design during a refactoring or reengineering operation.
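
If such a block really is repeated verbatim, a refactoring could extract it into a single function, and a characterization test can check that the extracted version leaves the same observable state as the inline one. The sketch is purely illustrative: since we do not know the real variables yet, `struct rtf_state`, its fields, `LEGACY_RESET` and `rtf_state_reset()` are all hypothetical.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical working variables reset before each processing step. */
struct rtf_state {
    int  depth;        /* current RTF group nesting */
    int  chars_out;    /* characters written in this block */
    char pending[16];  /* control word waiting to be flushed */
};

/* The repeated inline block, as it might appear before each loop or switch. */
#define LEGACY_RESET(s)         \
    do {                        \
        (s).depth = 0;          \
        (s).chars_out = 0;      \
        (s).pending[0] = '\0';  \
    } while (0)

/* Extracted version proposed for the refactoring. */
static void rtf_state_reset(struct rtf_state *s)
{
    memset(s, 0, sizeof *s);
}

/* Both versions must leave the observable fields in the same state. */
static void characterize_reset(void)
{
    struct rtf_state a, b;
    memset(&a, 0x5A, sizeof a);   /* simulate leftovers from a previous step */
    memset(&b, 0x5A, sizeof b);
    LEGACY_RESET(a);
    rtf_state_reset(&b);
    assert(a.depth == b.depth);
    assert(a.chars_out == b.chars_out);
    assert(strcmp(a.pending, b.pending) == 0);
}
```

The comparison is limited to what the rest of the code can observe: the two versions differ in the unused bytes of `pending`, which is acceptable only if no code reads them.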

NOTE: One problem with the C language is that some elements are defined outside the function, in includes or macros. As Michael Feathers describes in his book, it is quite possible to modify the code to create our own include that intercepts calls to these elements, in order to check quickly whether they are called with the right number and/or type of parameters. Please refer to Michael’s book if you have questions; I will not cover every case in this post.
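
Feathers calls this a preprocessing seam: because the preprocessor runs before the compiler, a test build can redefine a name so that the legacy code calls a recorder instead of the real routine, without editing the legacy function itself. A minimal sketch, kept in one file for brevity; in practice the redefinition would live in a test-only header included by the legacy file. `out_font()` and `legacy_write_heading()` are hypothetical names, not Opus Word code.

```c
#include <assert.h>
#include <string.h>

/* ---- test-only definitions (normally in a test header) ---- */
static int  seam_calls = 0;          /* number of calls intercepted   */
static int  seam_last_size = -1;     /* last 'size' argument received */
static char seam_last_name[32];      /* last 'name' argument received */

/* Replace the real output routine by a recorder of its arguments. */
#define out_font(name, size)                                        \
    do {                                                            \
        seam_calls++;                                               \
        seam_last_size = (size);                                    \
        strncpy(seam_last_name, (name), sizeof seam_last_name - 1); \
    } while (0)

/* ---- legacy code, compiled unchanged ---- */
static void legacy_write_heading(const char *font)
{
    out_font(font, 24);
}
```

A test then calls `legacy_write_heading()` and inspects `seam_calls`, `seam_last_size` and `seam_last_name` to see how, and with which arguments, the routine was called.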

Another problem I encountered in the code: many compiler directives such as #ifndef, some of them for different platforms (Mac). These will have to be taken into account during a reengineering.
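
Each combination of these directives compiles a different program, so a characterization test only describes the configuration it was built with. A hedged sketch: `MACINTOSH` and `rtf_newline()` are hypothetical names; the idea is to build and run the same test once per configuration, for example once with `-DMACINTOSH` and once without.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical platform switch, in the style of the legacy #ifdef blocks. */
#ifdef MACINTOSH
#define RTF_NEWLINE "\r"
#else
#define RTF_NEWLINE "\r\n"
#endif

static const char *rtf_newline(void)
{
    return RTF_NEWLINE;
}

/* The expected value depends on the build: record one per configuration. */
static void characterize_newline(void)
{
#ifdef MACINTOSH
    assert(strcmp(rtf_newline(), "\r") == 0);
#else
    assert(strcmp(rtf_newline(), "\r\n") == 0);
#endif
}
```

Keeping one expectation per configuration also gives an inventory of the platform variants that a reengineering will have to preserve or drop.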

Synthesis

The advantage of the approach advocated by Michael Feathers is that it does not focus on what the application is supposed to do according to its code, which can take a lot of time and is sometimes completely impossible, but on what the application really does. All the more so since the application does not always behave as it is supposed to.

You can quickly create characterization tests, at least for blocks of code with conditional structures (‘if .. else’, ‘switch’) or loops. Remember that each ‘path’ through these structures corresponds to a business (or technical) rule and should therefore normally be covered by one or more tests.

This raises the following questions: how many characterization tests are needed to ensure the transfer of knowledge of an application (one of our three scenarios)? What code coverage should these tests reach before starting a refactoring or a reengineering? Can we estimate the effort required to write them? This is what we will see in our next post.

This post is also available in Spanish and in French.