In the previous post, we have seen how to create our own PL/SQL Quality Profile activating all the 132 existing rules in the SonarQube default profile. Now, we can relaunch the analysis initiated earlier.

In the previous post, we have seen how to create our own PL/SQL Quality Profile activating all the 132 existing rules in the SonarQube default profile. Now, we can relaunch the analysis initiated earlier.

This way, I will be able to work with all the rules existing in the PL/SQL profile and select the ones that I want to use in order to create a PL/SQL dashboard for my demo environment.

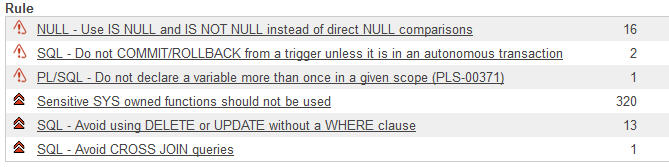

Blockers

At first, we can detect 16 violations of the rule ‘Use IS NULL and IS NOT NULL instead of direct NULL comparisons’. What is this about?

![]() A drill-down from the list of defects and SonarQube allows us to access the code presenting this bad practice.

A drill-down from the list of defects and SonarQube allows us to access the code presenting this bad practice.

We can see that the programmer performs a test on the variable ‘v_periodo’ in order to verify if this one is an empty string, because it has been initialized in this way, or because this value has been assigned to it somewhere in the algorithm. For example:

DECLARE name v_periodo varchar(10) := ''

The problem is that:

- Oracle considers an empty string as a NULL value. So as a result of the previous treatment, this variable does not contain an empty string, but a NULL value.

- Oracle makes the difference between an empty string and a NULL value. So the test:

IF v_periodo = ''

returns false as v_periodo is not an empty string, but a NULL value. The correct statement is:

IF v_periodo IS NULL

This is a good example of a ‘bad practice’ that will most certainly cause a failure in the application, since the condition tested (empty string) will never be possible, and therefore the corresponding business logic will never be executed.

And of course, we discover that this defect has been duplicated a few lines below:![]()

I think there must be somewhere a computer theorem or some variant of Murphy’s Law which states that the number of Copy-Paste of an incorrect instruction is proportional to its gravity. The more serious, the more reproduced.

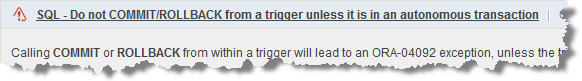

The other two ‘Blocker’ violations are quite clear and we just have to take a look at the SonarQube documentation to understand what is wrong and what are the consequences:

A COMMIT or a ROLLBACK statement in a trigger lead to an ORA-04902 error. It is necessary to use a PRAGMA (compiler directive) to declare an autonomous transaction in order to realize this. However, I would say that I tend to question any DML (Data Manipulation Language) instruction within a trigger.

A variable has been declared twice, leading to an Oracle PLS-03371 error. I especially appreciate that SonarQube indicates the 2 lines where this instruction is duplicated because it is one of the most difficult to investigate, especially in a program like this that has tens of thousands of line of code. This is common in older PL/SQL ‘Legacy’ applications.

What can we say about these 3 Blockers?

First, that their severity is completely justified:

- In the first case, a ‘malpractice’ programming leads to a logical error and a very possible bug.

- And the following two cases are bugs that will stop the application, which is not really what the users expect and that you want them to see.

These are therefore three rules for which no violation will be allowed: zero tolerance, these defects must be corrected immediately.

Second observation: these rules are known, judging by the low number of cases encountered. Nevertheless, it is difficult if not impossible to avoid that:

- A new member in the team, possibly not very experienced, does not not known the programming best practice. I bet this is the case of the first rule (use exclusively NULL / NOT NULL) and that the same programmer has used an incorrect syntax and then Copy/Pasted it everywhere.

- Even when everyone knows all the rules, the lack of attention is always possible. With just 1 or 2 defects for the next two rules, this is again probably what did happen.

It is easy to forget to declare a transaction or that a variable has already been declared a few tens (or even hundreds) of lines above. Fortunately, the SonarQube PL/SQL plugin allows us to verify and correct quickly and easily these defects. I especially appreciate to be able to go down to the line of code where the violation is to be found, validate it and decide for immediate remediation.

In the next post we will discuss the ‘Critical’. Again, Happy New Year and have a good week.

This post is also available in Leer este articulo en castellano and Lire cet article en français.